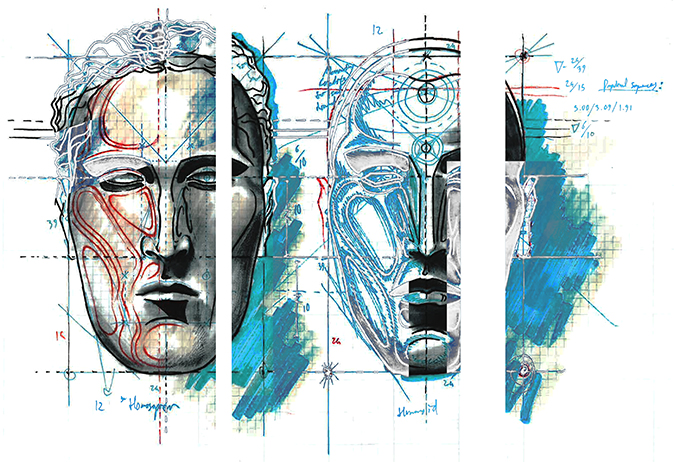

David Gondek and The Art of Artificial Intelligence

Me: (pressing the iPhone home button) Who is ELIZA?

Siri: She was my first teacher!

Me: Who is ELIZA?

Siri: I learned a lot from ELIZA but she was being a big negative.

Me: Siri, who is ELIZA?

Siri: She is my good friend. She was a brilliant psychiatrist, but she’s retired now.

Siri speaks respectfully. How else would she talk about her ancestors? ELIZA, an early example of natural language processing, predates Siri by more than 40 years. Like Siri, ELIZA is a chatbot program designed to emulate intelligent conversation. One main difference is that ELIZA acts like a psychotherapist, answering questions with questions, while Siri is a personal assistant, more like our slave.
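To make "answering questions with questions" concrete, here is a minimal Python sketch of an ELIZA-style responder. The three rules and the fallback reply are hypothetical, written only for illustration; they are not taken from the original ELIZA script, which was far larger.

```python
import re

# Hypothetical pattern/response rules, invented for this illustration.
RULES = [
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "What makes you feel {0}?"),
    (re.compile(r"\bmy (.+)", re.IGNORECASE), "What about your {0}?"),
]
DEFAULT_REPLY = "Can you tell me more?"


def eliza_reply(utterance: str) -> str:
    """Turn a statement into a question using the first matching rule."""
    text = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            # Echo the speaker's own words back inside a question.
            return template.format(match.group(1))
    return DEFAULT_REPLY


print(eliza_reply("I am happy."))
print(eliza_reply("Hello"))
```

The program has no idea what "happy" means. It only rearranges the words it was given, which is why this kind of conversation feels intelligent for a few turns and then falls apart.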

SAIC’s first Scientist-In-Residence, David Gondek, demonstrated how Siri’s intelligence, like ELIZA’s, still has limitations, but with more sass.

Gondek: Siri, I am happy.

Siri: That makes Siri happy.

Gondek: I am not happy.

Siri: Excellent!

Siri has no empathy because, as a simple rule-based system, Siri isn’t programmed to understand the difference between “happy” and “not happy.”
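The "happy" versus "not happy" failure is easy to reproduce. The Python sketch below is an assumption about how a simple keyword rule might behave, not a description of how Siri actually works; the word lists and function names are made up for the example. The first function only spots keywords, and the second flips the polarity of a sentiment word when a negator comes before it.

```python
# Tiny hypothetical word lists; a real system would use a much larger lexicon.
POSITIVE = {"happy", "glad", "great"}
NEGATIVE = {"sad", "angry", "upset"}
NEGATORS = {"not", "never", "no"}


def tokenize(text):
    """Lowercase the text and strip basic punctuation from each word."""
    return [word.strip(".,!?").lower() for word in text.split()]


def naive_sentiment(text):
    """Count sentiment keywords and ignore everything else, negation included."""
    score = 0
    for word in tokenize(text):
        if word in POSITIVE:
            score += 1
        elif word in NEGATIVE:
            score -= 1
    return score


def negation_aware_sentiment(text):
    """Same count, but a preceding negator flips the next sentiment word."""
    score = 0
    negate = False
    for word in tokenize(text):
        if word in NEGATORS:
            negate = True
            continue
        polarity = 1 if word in POSITIVE else -1 if word in NEGATIVE else 0
        if polarity:
            score += -polarity if negate else polarity
            negate = False  # the negator has been used up
    return score


print(naive_sentiment("I am not happy."))           # 1, read as positive
print(negation_aware_sentiment("I am not happy."))  # -1, read as negative
```

Even the second version is crude: it would misread sarcasm, double negatives, and a sentence such as "not only happy but thrilled." Patching one rule is simple, but the patches never end.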

Gondek is a computer scientist trained in natural language processing and machine learning, a research branch of artificial intelligence (AI). He says it’s a simple fix to write another algorithm to revise Siri’s compassion. However, there’s too much emotional content in the English language for machines to translate it into emotional intelligence. Siri can do specialized things, but handling human emotions is not one of them. “With computer learning, it’s like learning another language from a completely different universe,” says Gondek.

He was a leading researcher on the IBM team that developed Watson, the AI supercomputer that won the game show Jeopardy! in 2011. Now he is working with SAIC artists in his course, Algorithms, Information, and AI. Students are examining information retrieval, data collection, sentiment detection (using words to tell if someone is being positive, negative or neutral) and the challenges of machine translation from one human language to another (e.g., Google Translate).

AI is a hot topic in mainstream culture: consider the recent Spike Jonze sci-fi film Her. Joaquin Phoenix’s character falls in love with the artificially intelligent operating system in his smartphone. The female voice, performed by Scarlett Johansson, works like Siri but develops a more human consciousness, leaving us to wonder about the future of AI in our society.

“Very early on when computer science began, scientists focused on problems that were really hard for people to solve,” says Gondek. The 1956 AI Conference at Dartmouth College was a seminal event in the history of AI research. Scientists were interested in how machines learned, used language and recognized patterns. At the time, AI also focused on the game of chess for its association with human problem-solving.

Instead of focusing on what’s hard for humans, research is shifting towards improving the computer’s ability to emulate what is easy for humans. “Now we talk about recognizing images of cats versus dogs and detecting sentiment, whether someone is happy or sad. The focus of algorithms has changed, which recognizes how computers work very differently with humans. What’s very simple and natural for us is very hard for a machine.” But do we want machines to have feelings? Do we want them to completely imitate us?

To examine sentiment detection in computers, Gondek’s students started with a basic test for machine intelligence, similar to the Turing Test that Alan Turing proposed in 1950 when he posed the question: “Can machines think?” Gondek and his students are asking their own questions. How does sentiment work in machines? If we say something positive, does it guarantee a positive response?

They began their experiment by looking at a photo of a crying woman. If a computer studied the image, it would recognize sadness. Its programmed algorithm equates tears with unhappiness. But there’s an added curveball. The crying woman wore a bridal gown, so maybe these were tears of happiness. Without common sense, the computer can’t comprehend the added contextual information, further separating humans and machines.

Understanding the primitive building blocks of AI — computational linguistics, linguistic processing and sentiment detection — gives students the freedom to use AI as a medium for their own projects. Anthony Ladson, BA senior in Visual and Critical Studies, is developing a program to translate between English and Yoda. Gill Park, BFA senior, is analyzing the sentiment of tweets based on their location in Chicago, detecting the moods of neighborhoods, positive or negative, and outputting this information as a weather map. His big question is: how can we map our happiness?
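A mood map of this kind can be sketched in a few lines of Python. This is not Park's actual program: the word lists, the sample tweets, and the weather labels are all invented for illustration, and a real project would need location-tagged tweets and a far richer sentiment model.

```python
from collections import defaultdict

# Hypothetical sentiment lexicon, invented for this sketch.
POSITIVE = {"love", "great", "happy", "beautiful"}
NEGATIVE = {"hate", "awful", "sad", "delay"}


def score_tweet(text):
    """Positive words add one, negative words subtract one."""
    words = [word.strip(".,!?#").lower() for word in text.split()]
    return sum((w in POSITIVE) - (w in NEGATIVE) for w in words)


def mood_by_neighborhood(tweets):
    """Average the tweet scores for each neighborhood."""
    scores = defaultdict(list)
    for neighborhood, text in tweets:
        scores[neighborhood].append(score_tweet(text))
    return {name: sum(vals) / len(vals) for name, vals in scores.items()}


def forecast(average):
    """Translate an average score into a weather-map label."""
    if average > 0:
        return "sunny"
    if average < 0:
        return "stormy"
    return "cloudy"


# Made-up sample data: (neighborhood, tweet text) pairs.
sample_tweets = [
    ("Pilsen", "Love this great day"),
    ("Pilsen", "Great tacos"),
    ("Loop", "Awful traffic"),
    ("Loop", "I hate this commute"),
]

for name, average in mood_by_neighborhood(sample_tweets).items():
    print(name, average, forecast(average))
```

Averaging by neighborhood keeps one very active account from dominating the map, though it still treats every tweet as equally sincere, which a human reader would not.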

AI researchers are not trying to replicate the complete human experience. Even though we can train a computer to analyze tweets to recognize if people are saying good things or bad things (sentiment detection), computer comprehension is not equivalent to human comprehension.

Advertisers can use sentiment detection to gather proprietary information. Thankfully, advertisers cannot read our minds, but by analyzing the opinions we post on Facebook or Twitter, they can find ways to better promote a product, tugging at our heartstrings to coax us to click on an ad. Google knows what we are searching for, the same way Amazon remembers our purchases, and Facebook knows who our friends are and how to market ads to us.

“These are excellent examples of a machine learning our thought patterns. It can’t immediately read our minds, but it’s trying to.” Gondek says, though, we shouldn’t fear robots overpowering humans, because machines have no agency. What we should fear is our own trust in the computers. “As the algorithms get better, they can become good enough where you trust the output.”
